You can select which large language model powers Chat. At this time there’s no option to select the Autocomplete model, because we’ve optimized a custom low-latency model specifically for that feature.

Claude 3.7 Sonnet

Anthropic’s newest and most capable LLM, released on Feb 24, 2025 as the third generation of Sonnet.

Claude 3.5 Sonnet (2024-10-22)

Anthropic’s second iteration of Claude 3.5 Sonnet, released on Oct 22, 2024.

Claude 3 (Opus)

Anthropic’s original Opus model.

DeepSeek R1

DeepSeek’s reasoning model.

DeepSeek V3

DeepSeek’s latest base model.

OpenAI o1-mini

OpenAI’s highest-performance model for coding and math tasks. Despite the name, this model is both faster and stronger than o1-preview on coding and math tasks!

OpenAI o1-preview

OpenAI’s newest reasoning model, designed to solve problems across a broad range of domains.

gpt-4o-2024-09-03

OpenAI’s newest GPT-4o checkpoint.

gpt-4o-2024-08-06

OpenAI’s 2024-08-06 checkpoint for GPT-4o.

gpt-4o-2024-05-13

OpenAI’s 2024-05-13 checkpoint for GPT-4o.

GPT-4 Turbo

OpenAI’s original GPT-4 Turbo.

Llama 3.1 405B

Meta’s largest model. Open Source.

Llama 3.1 70B

The successor to Llama 3 70B.

Llama 3.1 8B

A small but fast Llama model.

Mistral Large 2

Mistral’s newest and most capable LLM, released on July 24, 2024.

Coming Soon

GPT-5

OpenAI’s anticipated successor to GPT-4 and most capable coding LLM, available for early access on Double later this year.

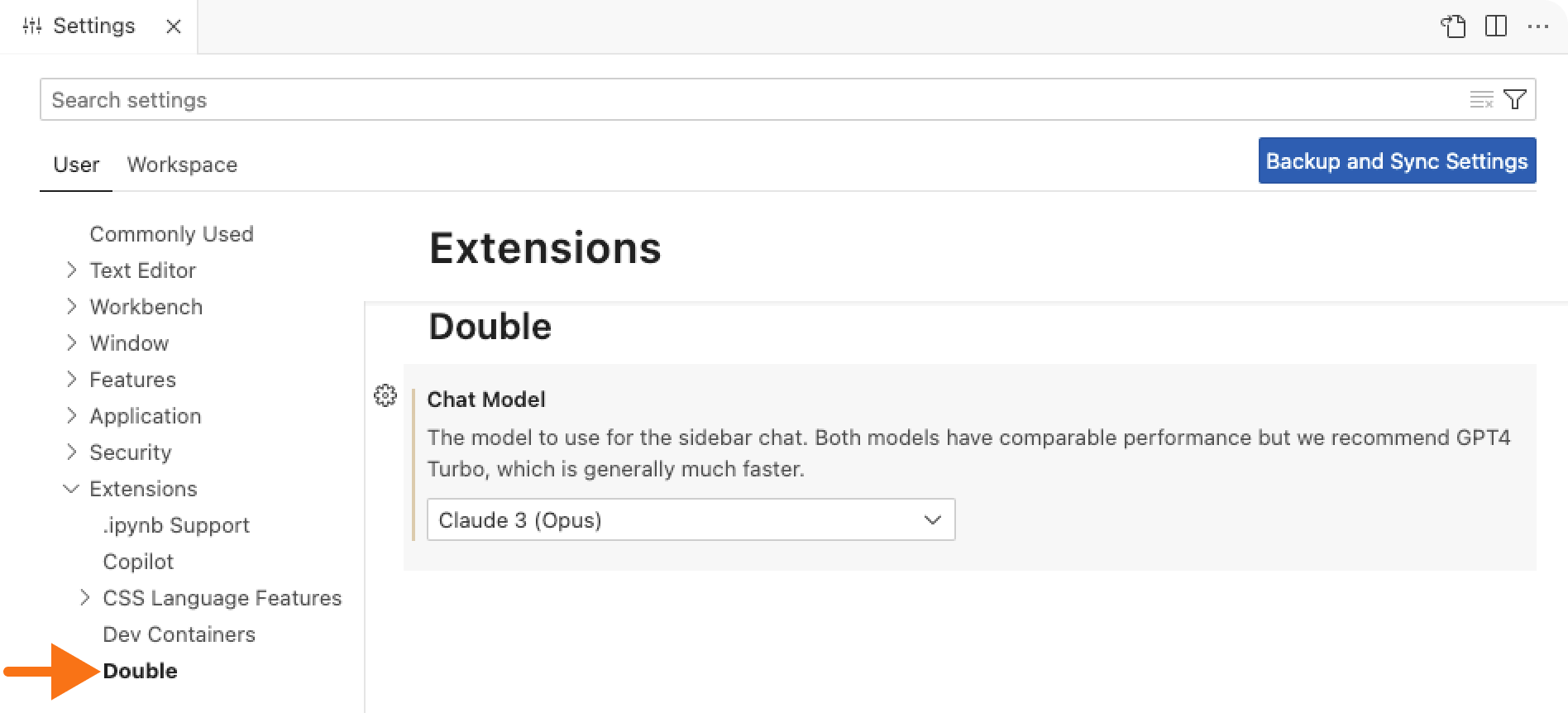

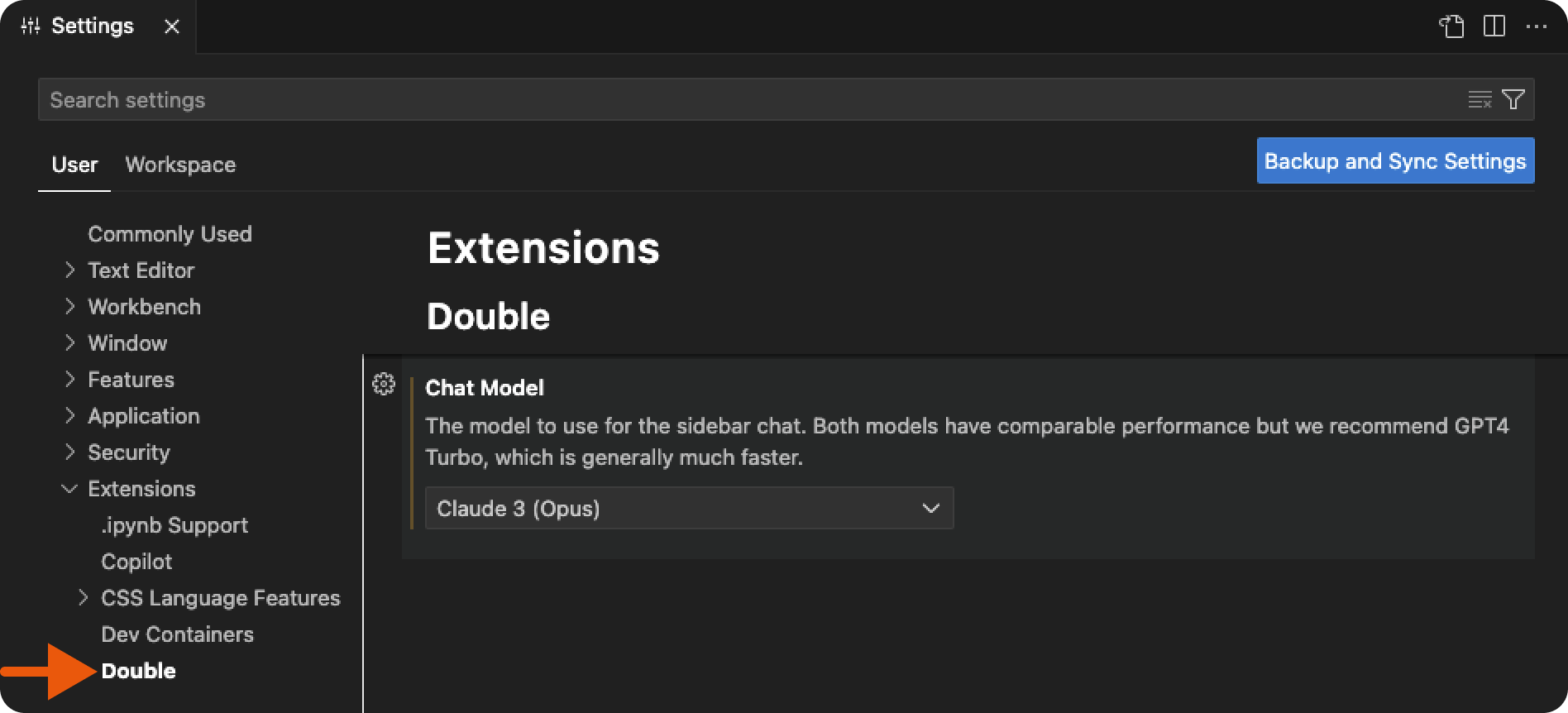

Selecting a Model

To change which model Double uses, open the VS Code settings (Cmd + , on macOS or Ctrl + , on Windows and Linux), expand the Extensions dropdown on the left side of the screen, and select Double. Here you’ll find a dropdown listing all of the available models.